Detection Engineering sits at the intersection of InfoSec, Cloud Infrastructure, DevOps, and Software Development. In this post, I’ll step through the thought process of a Detection Engineer in the context of collecting security data.

Detection Engineers write code to build and deploy systems that validate security controls and detect suspicious behavior. Our goal is to protect the “Crown Jewels” and prevent incidents in the organizations we serve.

Getting started as a Detection Engineer involves mapping and classifying systems (and their data) by importance. With that understanding, we then write “detections” that flag suspicious behaviors and rank them by risk level.
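To make “risk level” concrete, here is a minimal Python sketch that scores a detection hit by multiplying the criticality of the affected asset by the severity of the observed behavior. The tier names, the hostnames in `ASSETS`, and the score cut-offs are hypothetical placeholders, not a standard model; the point is only that the same behavior should rank higher when it touches a more important system.

```python
from enum import IntEnum


class Criticality(IntEnum):
    """How important an asset is to the organization (hypothetical tiers)."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3  # the "Crown Jewels"


class Severity(IntEnum):
    """How suspicious the observed behavior is (hypothetical tiers)."""
    INFO = 1
    SUSPICIOUS = 2
    MALICIOUS = 3


# Hypothetical asset inventory: hostname -> criticality. In practice this
# would come from an asset inventory or cloud resource tags, not a literal dict.
ASSETS = {
    "build-runner-01": Criticality.LOW,
    "bastion-01": Criticality.MEDIUM,
    "payments-db-01": Criticality.HIGH,
}


def risk_level(host: str, severity: Severity) -> str:
    """Combine asset criticality and behavior severity into a coarse risk level."""
    # Unknown hosts default to MEDIUM so they are never silently ignored.
    criticality = ASSETS.get(host, Criticality.MEDIUM)
    score = int(criticality) * int(severity)  # ranges from 1 to 9
    if score >= 6:
        return "high"
    if score >= 3:
        return "medium"
    return "low"


print(risk_level("payments-db-01", Severity.SUSPICIOUS))   # high
print(risk_level("build-runner-01", Severity.SUSPICIOUS))  # low
```

Defaulting unknown hosts to a middle tier is a deliberate choice in this sketch: an unmapped system is a gap in the inventory, and scoring it as low-risk would hide exactly the assets we know least about.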

You can’t detect what you can’t see. So let’s start with getting data.

What is “security data”?

Security data refers to audit logs of past behavior that are used for security monitoring. These logs exist in almost every system we use day to day:

```
$ ls /var/log
alternatives.log apache2 auth.log cloud-init-output.log dist-upgrade journal landscape lxd osquery syslog td-agent wtmp
amazon apt btmp cloud-init.log dpkg.log kern.log lastlog mail.log suricata tallylog unattended-upgrades

$ tail /var/log/auth.log
<38>1 2022-07-18T00:41:20.962241+00:00 ip-172-31-29-253 sshd 27835 - - Invalid user sftpuser from 193.106.191.150 port 44042
<38>1 2022-07-18T00:41:36.574327+00:00 ip-172-31-29-253 sshd 27835 - - Connection closed by invalid user sftpuser 193.106.191.150 port 44042 [preauth]
<38>1 2022-07-18T00:59:19.284071+00:00 ip-172-31-29-253 sshd 27915 - - Invalid user dev from 82.65.239.16 port 54074
<38>1 2022-07-18T00:59:19.377547+00:00 ip-172-31-29-253 sshd 27915 - - Received disconnect from 82.65.239.16 port 54074:11: Bye Bye [preauth]
<38>1 2022-07-18T00:59:19.377731+00:00 ip-172-31-29-253 sshd 27915 - - Disconnected from invalid user dev 82.65.239.16 port 54074 [preauth]
```

Audit logs exist in several places in our environment, which can be broken down this way:

Each layer provides context to the overall picture, and in fact, the same behavior can span multiple layers at once.
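Whatever layer a log comes from, the first step toward correlating it is parsing raw lines into fields. The auth.log lines above follow the RFC 5424 syslog layout, `<PRI>VERSION TIMESTAMP HOSTNAME APP-NAME PROCID MSGID STRUCTURED-DATA MSG`, where PRI encodes `facility * 8 + severity`. Below is a simplified Python parser for that layout, plus a regex for the client address at the end of sshd messages; `parse_syslog` and its output field names are illustrative choices, not a standard schema, and the structured-data handling is deliberately minimal.

```python
import re
from datetime import datetime
from typing import Optional

# Simplified RFC 5424 header: <PRI>VERSION TIMESTAMP HOSTNAME APP-NAME PROCID
# MSGID STRUCTURED-DATA [MSG]. Structured data is accepted as "-" or one or
# more [...] elements that contain no escaped "]" characters.
SYSLOG_RE = re.compile(
    r"^<(?P<pri>\d{1,3})>(?P<version>\d+) (?P<timestamp>\S+) (?P<host>\S+) "
    r"(?P<app>\S+) (?P<procid>\S+) (?P<msgid>\S+) (?P<sd>-|(?:\[[^\]]*\])+)"
    r"(?: (?P<msg>.*))?$"
)
# The sshd messages in the excerpt contain "<IPv4> port <n>"; IPv6 is not handled.
SSHD_PEER_RE = re.compile(r"(?P<src_ip>\d{1,3}(?:\.\d{1,3}){3}) port (?P<src_port>\d+)")


def parse_syslog(line: str) -> Optional[dict]:
    """Parse one RFC 5424 line into a dict, or return None if it doesn't match."""
    m = SYSLOG_RE.match(line.strip())
    if m is None:
        return None
    pri = int(m["pri"])
    ts = m["timestamp"]
    record = {
        # "-" is the RFC 5424 nil value. "Z" is rewritten because Python < 3.11
        # fromisoformat() rejects it (and expects 0, 3 or 6 fractional digits).
        "timestamp": None if ts == "-" else datetime.fromisoformat(ts.replace("Z", "+00:00")),
        "facility": pri // 8,  # 4 = security/authorization messages
        "severity": pri % 8,   # 6 = informational
        "host": m["host"],
        "app": m["app"],
        "pid": int(m["procid"]) if m["procid"].isdigit() else None,
        "msg": m["msg"] or "",
    }
    # Pull the client address out of sshd messages when one is present.
    peer = SSHD_PEER_RE.search(record["msg"])
    if peer is not None:
        record["src_ip"] = peer["src_ip"]
        record["src_port"] = int(peer["src_port"])
    return record
```

Run on the first auth.log line above, this returns facility 4 (security/authorization), severity 6 (informational), app `sshd`, PID 27835, and a `src_ip`/`src_port` of `193.106.191.150`/`44042`. In a real pipeline this parsing is often handled by the log shipper or the SIEM itself; the sketch just shows what those fields are.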

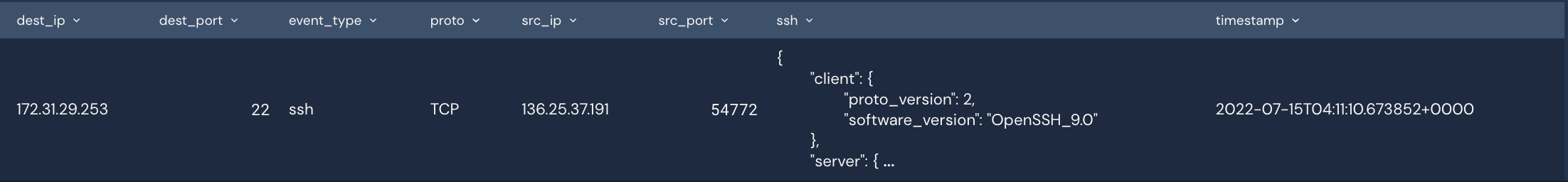

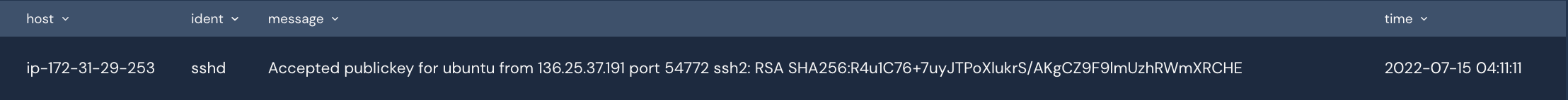

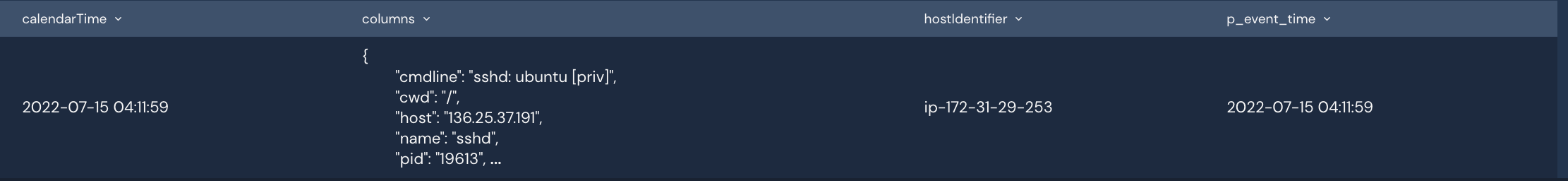

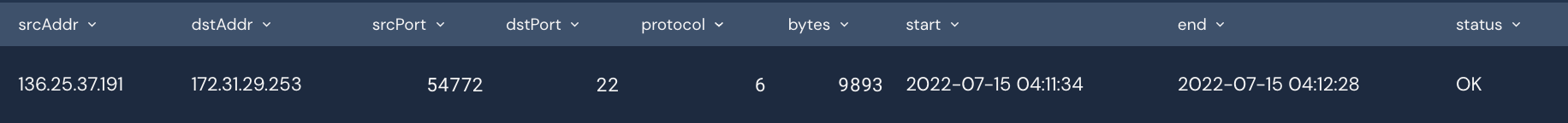

As an example, let’s look at four different logs from one SSH session, joined by time and source port.

Each log tells us something different about that session. Are all of them necessary? Definitely not. But together they fill in information gaps and add redundancy to our pipeline.
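Joining logs by time and source port can be sketched in a few lines of Python. This version assumes every record has already been parsed into a dict with `timestamp` and `src_port` keys (a hypothetical shape), groups records by source port, and starts a new session whenever two consecutive events are more than `max_gap` apart, since the same ephemeral port will eventually be reused by an unrelated connection. The five-minute gap and the `flow-log` record in the example are invented for illustration.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def join_sessions(records, max_gap=timedelta(minutes=5)):
    """Group records from different log sources into per-connection sessions.

    Records are keyed on their client source port, sorted by time, and split
    whenever two consecutive events are more than `max_gap` apart.
    """
    by_port = defaultdict(list)
    for rec in records:
        by_port[rec["src_port"]].append(rec)

    sessions = []
    for recs in by_port.values():
        recs.sort(key=lambda r: r["timestamp"])
        current = [recs[0]]
        for rec in recs[1:]:
            if rec["timestamp"] - current[-1]["timestamp"] > max_gap:
                sessions.append(current)  # gap too large: close this session
                current = []
            current.append(rec)
        sessions.append(current)
    return sessions


# Hypothetical records: three derived from the auth.log excerpt plus an
# invented network-flow record for the first connection.
records = [
    {"source": "auth.log", "timestamp": datetime(2022, 7, 18, 0, 41, 20), "src_port": 44042},
    {"source": "auth.log", "timestamp": datetime(2022, 7, 18, 0, 41, 36), "src_port": 44042},
    {"source": "flow-log", "timestamp": datetime(2022, 7, 18, 0, 41, 19), "src_port": 44042},
    {"source": "auth.log", "timestamp": datetime(2022, 7, 18, 0, 59, 19), "src_port": 54074},
]
for session in join_sessions(records):
    print(session[0]["src_port"], [r["source"] for r in session])
# 44042 ['flow-log', 'auth.log', 'auth.log']
# 54074 ['auth.log']
```

Keying on source port alone mirrors the example above; adding the source IP to the key would make collisions between unrelated clients less likely, at the cost of excluding logs that don't record it.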

Collecting security logs comes with a series of tradeoffs:

Ideally, we optimize for relevant, high-signal logs. Less is more here, especially when it comes to fast response: the more data we collect, the slower it is to search through it.

For instance, in the example above I would prioritize the osquery logs, because they provide the richest data on the overall connection and let me analyze them in isolation with higher confidence.

Before we can create detections, the data has to be centralized in a SIEM (Security Information and Event Management system).

The SIEM acts as the brain of your security monitoring pipeline: it is where all data and detection logic live.

But getting logs there is non-trivial, especially in large organizations with complex cloud infrastructure. Logs also arrive in different formats and must be normalized before we can search and analyze them effectively.
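Normalization means mapping each source's field names, timestamp formats, and types onto one shared schema so that a single query works across all of them. Here is a minimal sketch, assuming two hypothetical inputs: a parsed syslog record with an ISO-8601 timestamp, and a JSON event from an agent that reports epoch seconds and stores the port as a string. The `Event` dataclass and both input shapes are stand-ins for whatever schema and sources your SIEM actually uses.

```python
import json
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Event:
    """Hypothetical common schema that every source is mapped onto."""
    source: str
    timestamp: datetime  # timezone-aware, UTC
    host: str
    src_ip: Optional[str]
    src_port: Optional[int]


def normalize_syslog(rec: dict) -> Event:
    """Map a parsed syslog record (ISO-8601 'timestamp' with offset, 'host')."""
    ts = datetime.fromisoformat(rec["timestamp"]).astimezone(timezone.utc)
    return Event("syslog", ts, rec["host"], rec.get("src_ip"), rec.get("src_port"))


def normalize_agent_json(raw: str) -> Event:
    """Map a hypothetical agent event: epoch 'ts', 'hostname', string 'remote_port'."""
    doc = json.loads(raw)
    ts = datetime.fromtimestamp(float(doc["ts"]), tz=timezone.utc)
    port = doc.get("remote_port")
    return Event(
        "agent", ts, doc["hostname"], doc.get("remote_ip"),
        int(port) if port is not None else None,
    )


a = normalize_syslog({"timestamp": "2022-07-18T00:41:20+00:00", "host": "ip-172-31-29-253",
                      "src_ip": "193.106.191.150", "src_port": 44042})
b = normalize_agent_json('{"ts": 1658104880, "hostname": "ip-172-31-29-253", '
                         '"remote_ip": "193.106.191.150", "remote_port": "44042"}')
print(a.timestamp == b.timestamp, a.src_port == b.src_port)  # True True
```

Once both records share a UTC timestamp and an integer port, correlating them by time and source port becomes a simple comparison instead of a per-source special case.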

Open-source tools like Fluentd and Logstash are great for shipping host-based logs into cloud storage, such as S3, which SIEMs can consume from. They accept input over multiple protocols, such as syslog and raw TCP, which lets us collect a wider variety of audit logs.

Again, always prioritize logs that can give you the best signal at the lowest latency/cost.

Operational monitoring is the last critical part of security data collection.

Security monitoring depends on a continuous stream of logs. If that stream stops for any reason, it creates a blind spot for both proactive detection and reactive response.

Metrics and alarms should always be configured to catch this, and ideally failover and redundancy should be in place as well.
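One simple form of this monitoring is a freshness check: for each log source, compare the timestamp of the newest ingested event against a per-source silence budget. In this sketch the source names come from the `/var/log` listing above, while the budgets in `MAX_SILENCE` and the `last_seen` input (which a real deployment would query from the SIEM) are hypothetical. Alerting is left out; the function just returns the stale sources.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical per-source budgets: how long each source may stay silent
# before we treat it as a pipeline outage rather than a quiet period.
MAX_SILENCE = {
    "auth.log": timedelta(minutes=15),
    "osquery": timedelta(minutes=5),
    "suricata": timedelta(minutes=5),
}


def stale_sources(last_seen: dict, now: datetime) -> list:
    """Return the sources whose newest event is older than their budget.

    `last_seen` maps source name -> timestamp of the newest event ingested
    from it. A source missing from `last_seen` is treated as stale.
    """
    stale = []
    for source, budget in MAX_SILENCE.items():
        seen = last_seen.get(source)
        if seen is None or now - seen > budget:
            stale.append(source)
    return sorted(stale)


now = datetime(2022, 7, 18, 1, 0, tzinfo=timezone.utc)
last_seen = {
    "auth.log": now - timedelta(minutes=1),
    "osquery": now - timedelta(minutes=10),
}
print(stale_sources(last_seen, now))  # ['osquery', 'suricata']
```

Treating a never-seen source as stale is intentional: a source that was configured but never delivered anything is the most likely blind spot of all.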

Getting high-quality data for security monitoring is a critical first step for any detection engineer.

It takes time to instrument all of the necessary systems, normalize their data, and make it operational. The good news is that these are largely one-time investments that pay off over the long term, for both the security team and the organization’s overall security posture.

With this data, we can create detections that flag attacker behavior, confident that our data will keep flowing (and arrive on time).
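As a first example, here is a minimal sketch of a detection over the auth.log data shown earlier: it counts sshd `Invalid user <name> from <ip>` lines per source IP within a sliding window and flags any IP that crosses a threshold. The threshold and window are hypothetical starting values rather than tuned numbers, and the sketch assumes lines arrive in chronological order.

```python
import re
from collections import defaultdict, deque
from datetime import datetime, timedelta

# Matches sshd's "Invalid user <name> from <ip> port <n>" message; the
# RFC 5424 timestamp is the second space-separated field of each line.
INVALID_USER_RE = re.compile(r"Invalid user (?P<user>\S*) from (?P<ip>\S+) port \d+")


def detect_invalid_user_bursts(lines, threshold=5, window=timedelta(minutes=10)):
    """Flag source IPs with >= `threshold` invalid-user attempts inside `window`.

    Returns {ip: timestamp at which the IP first crossed the threshold}.
    """
    recent = defaultdict(deque)  # ip -> timestamps of attempts still in window
    alerts = {}
    for line in lines:
        m = INVALID_USER_RE.search(line)
        if m is None:
            continue
        ts = datetime.fromisoformat(line.split(" ")[1])
        q = recent[m["ip"]]
        q.append(ts)
        # Drop attempts that have fallen out of the sliding window.
        while ts - q[0] > window:
            q.popleft()
        if len(q) >= threshold and m["ip"] not in alerts:
            alerts[m["ip"]] = ts
    return alerts


# Hypothetical burst: five invalid-user attempts from one IP, 10 seconds apart.
burst = [
    f"<38>1 2022-07-18T00:41:{s:02d}.000000+00:00 ip-172-31-29-253 sshd 27835 - - "
    f"Invalid user u{s} from 193.106.191.150 port {40000 + s}"
    for s in range(0, 50, 10)
]
print(detect_invalid_user_bursts(burst))  # flags 193.106.191.150 at 00:41:40
```

On the five real lines in the excerpt this returns no alerts, since each IP makes only one invalid-user attempt; the risk-level idea from earlier could then be applied to decide how loudly a hit on a given host should alert.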