The SOC Operating Model Is Changing

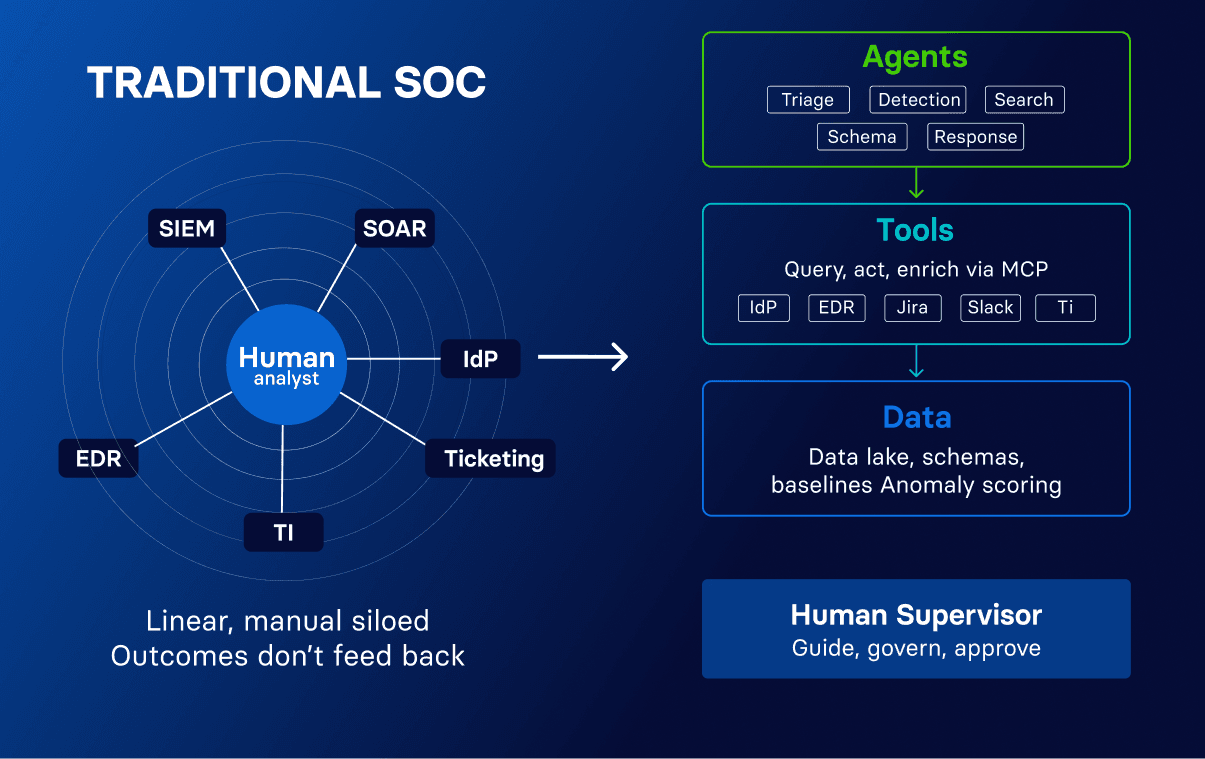

Security operations is in the middle of an operating model shift. For the past decade, the SOC has been organized around one pattern: humans do the core work, and tools support them. SIEM platforms store and search data, SOAR platforms execute playbook response actions, and threat intelligence feeds enrich alerts. In that model, the human analyst remains the protagonist.

That model is changing. AI agents are increasingly capable of taking the operational work that has consumed analyst time for years: repetitive alert triage, manual query writing and pivoting, detection tuning, and writing reports after incidents. As this shift accelerates, we believe that AI agents will become the primary workers across triage, detection, investigation, and reporting, while humans supervise the work, guide agents on organizational priorities, and make the judgment calls that require institutional context.

This transition is just getting started, but the pace is accelerating. Most enterprise security teams are somewhere in this shift, testing AI against specific workflows and gradually expanding scope and autonomy. As foundation models (like Claude, GPT, or Gemini) improve in reasoning, speed, and cost efficiency, the work agents can reliably own will expand while human responsibility shifts further toward supervision, priority-setting, and the judgment calls that define the security program.

The architectural decisions you make now will determine how much value your security team can unlock as that balance evolves.

What Defines an AI SOC Platform?

An AI SOC platform is a security operations center (SOC) platform in which AI agents serve as the primary workers across the SOC lifecycle, handling triage, detection, investigation, and reporting within a closed-loop architecture that retains and applies learning over time. Human operators supervise the agents, set organizational policies, and govern the level of autonomy granted to the agents.

The distinction from a traditional SIEM with AI features comes down to whether the platform was designed for agents to be the primary workers, or for humans with AI assisting on the side. A platform designed for agents gives them native access to the full data set, detection logic they can read and modify, a mechanism for the team to express organizational priorities, and a feedback loop where agent outcomes improve the system. A platform designed for humans, with AI layered on afterward, constrains agents to the same narrow interfaces humans use. If the alert volume, investigation burden, and detection backlog look the same six months after deployment, the AI is accelerating the process without improving the system.

The defining characteristic of the AI SOC is that agent work feeds back into the platform, making subsequent work more effective. Triage decisions inform detection tuning. Investigation findings expand coverage. Institutional knowledge, the accumulated understanding of what is normal in an environment, gets encoded in the system rather than remaining trapped in individual expertise. Security analysts actively transfer their skills and judgment into the platform through guidance, risk criteria, and investigation playbooks, and once encoded, that expertise scales across every agent run at zero marginal cost.
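To make "triage decisions inform detection tuning" concrete, the sketch below aggregates triage verdicts per detection and flags the noisy ones as tuning candidates. It is a minimal illustration, not a description of any platform's implementation: the `(detection_id, is_false_positive)` record shape, the function name, and both thresholds are assumptions chosen for the example.

```python
from collections import defaultdict

def tuning_candidates(verdicts, min_alerts=20, fp_rate_threshold=0.8):
    """Return detection IDs whose false-positive rate suggests tuning.

    `verdicts` is an iterable of (detection_id, is_false_positive) pairs.
    Both thresholds are illustrative defaults, not recommended values.
    """
    totals = defaultdict(int)
    false_positives = defaultdict(int)
    for detection_id, is_fp in verdicts:
        totals[detection_id] += 1
        if is_fp:
            false_positives[detection_id] += 1
    # Only consider detections with enough alerts to judge fairly.
    return sorted(
        d for d, n in totals.items()
        if n >= min_alerts and false_positives[d] / n >= fp_rate_threshold
    )
```

In a closed loop, the output of a routine like this would feed the detection-tuning stage rather than a dashboard, so that repeated false positives change the detection itself.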

Over time, the repetitive work is absorbed by agents, freeing the team to focus on threat modeling, coverage strategy, risk prioritization, and the decisions that shape the security program. Because agents operate continuously at machine speed, the SOC's ability to detect and respond also changes fundamentally: threats that would have taken a human hours to investigate are triaged, correlated, and acted upon within minutes.

Architectural Requirements

The closed loop does not emerge from any arbitrary combination of AI and security tooling. It depends on specific architectural properties that enable agents to read, reason about, and update the systems they operate within. Each requirement below is load-bearing: if any one is absent, the feedback loop breaks and the system cannot learn from its own operations.

Native access to the full security data set. An AI SOC platform requires that agents can query the full breadth of an organization's security data, not just alert metadata or recent events. Investigation and threat hunting both require correlating identity activity with infrastructure events, examining historical baselines, and understanding how a specific alert or hypothesis fits within broader behavioral patterns across log sources. The underlying data architecture needs consistent, structured schemas so that agents reason over reliable fields regardless of which source produced the event, making cross-source correlation dependable. That same structured data foundation enables statistical analysis and behavioral baselining, such as anomaly scoring across user activity or network traffic, which gives agents quantitative signals alongside rule-based detections. It also ensures that investigation outcomes contain enough detail to inform improvements elsewhere in the system.
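As a rough illustration of why consistent schemas matter for baselining, the sketch below counts one actor's activity across two log sources and scores a new observation against history with a simple z-score. The field names (`source`, `actor`, `day`) and the choice of z-score are assumptions for the example; a real platform may use different schemas and more robust statistics.

```python
from collections import Counter
from statistics import mean, stdev

def daily_counts(events, actor):
    """Count events per day for one actor, relying on shared field names."""
    return Counter(e["day"] for e in events if e["actor"] == actor)

def anomaly_score(baseline, observed):
    """Z-score of `observed` against a list of historical daily counts."""
    if len(baseline) < 2:
        return 0.0  # too little history to form a baseline
    sigma = stdev(baseline)
    if sigma == 0:
        return 0.0 if observed == baseline[0] else float("inf")
    return (observed - mean(baseline)) / sigma

# Hypothetical normalized events: both sources expose the same fields,
# so one function can correlate them without per-source parsing.
events = [
    {"source": "idp", "actor": "alice", "day": "2024-05-01"},
    {"source": "cloud_audit", "actor": "alice", "day": "2024-05-01"},
    {"source": "idp", "actor": "alice", "day": "2024-05-02"},
]
counts = daily_counts(events, "alice")
score = anomaly_score([10, 12, 11, 9, 13], observed=30)
```

Because every source maps to the same field names, `daily_counts` needs no knowledge of which system produced an event, which is the property that makes cross-source correlation dependable.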

Detection logic that AI can understand, modify, and optimize. The feedback loop from triage to detection improvement requires that detection logic be expressed in a form that agents can parse, understand the intent behind, and modify. Many security platforms express detections in proprietary query languages or vendor-specific formats that become black boxes agents must work around. Detections expressed in common, general-purpose programming languages provide agents with the most reliable foundation for reading, generating, and continuously optimizing detection logic without manual training in proprietary syntax. Agents also need to understand the threat model a detection addresses and how the team wants alerts handled, including risk criteria and known false positive patterns, so they can reason about what a detection means, not just what it matches.
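A detection written as ordinary code can carry its intent alongside its logic. The sketch below is a hypothetical Python detection, not any vendor's actual rule format: the event fields, the constant names, and the break-glass account are all invented for illustration.

```python
# Context an agent can read before proposing a change (illustrative text).
THREAT_MODEL = "A console login without MFA may indicate stolen credentials."
KNOWN_FALSE_POSITIVES = ["Break-glass account used during an identity provider outage."]
BREAK_GLASS_ACCOUNTS = {"breakglass-admin"}  # hypothetical account name

def rule(event: dict) -> bool:
    """Match successful console logins that did not use MFA."""
    if event.get("action") != "console_login":
        return False
    if event.get("outcome") != "success":
        return False
    if event.get("actor") in BREAK_GLASS_ACCOUNTS:
        return False  # documented false-positive pattern
    return not event.get("mfa_used", False)
```

Because the threat model and known false positives sit next to the matching logic, an agent proposing a change can check the edit against the stated intent rather than only against the pattern.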

Organizational guidance and priorities. Agents need to understand what matters most to the organization they protect. An AI SOC platform needs a mechanism for security teams to declare their organizational context in a form that agents can consume directly: which systems are crown jewels, which environments hold sensitive data, which user populations have elevated access, and what the team's risk tolerance is. At a more granular level, per-detection context specifies how specific alert types should be handled and what thresholds separate escalation from auto-resolution. This is how human judgment is encoded into agent behavior, ensuring that every triage decision and detection recommendation aligns with the organization's actual priorities.
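One possible shape for such a mechanism is a small, declarative structure that agents read at decision time. The sketch below is an assumption-laden example: the class names, the additive scoring, and the threshold values are hypothetical and only meant to show how organization-wide context and per-detection thresholds could combine.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class OrgContext:
    crown_jewels: frozenset
    sensitive_environments: frozenset
    privileged_users: frozenset

@dataclass(frozen=True)
class DetectionGuidance:
    auto_resolve_below: int  # scores under this may be closed by the agent
    escalate_at: int         # scores at or above this go to a human

def risk_score(alert: dict, ctx: OrgContext) -> int:
    """Add one point for each organizational risk factor the alert touches."""
    return sum([
        alert["system"] in ctx.crown_jewels,
        alert["environment"] in ctx.sensitive_environments,
        alert["actor"] in ctx.privileged_users,
    ])

def disposition(score: int, guidance: DetectionGuidance) -> str:
    """Map a risk score to a handling decision using per-detection thresholds."""
    if score >= guidance.escalate_at:
        return "escalate"
    if score < guidance.auto_resolve_below:
        return "auto_resolve"
    return "investigate"
```

Keeping the context declarative means the security team can change priorities, for example by adding a system to `crown_jewels`, without touching agent logic.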

A unified platform where outcomes flow between stages. The closed loop breaks when SOC lifecycle components are distributed across disconnected tools. When agents are tightly coupled to the underlying infrastructure, operating within the same platform that owns the data, detections, and investigation layers, they naturally have deeper access to the full operational context without going through external APIs. The platform knows how and why data was ingested, which detection logic produced an alert, and what prior triage outcomes exist for similar patterns. Overlay tools that sit on top of existing infrastructure can read data and produce summaries, but they often struggle to write changes back into detections. End-to-end ownership is what makes the feedback loop between stages real.

Context assembly beyond the security perimeter. Effective triage requires context that extends beyond the security platform, because that context directly changes impact assessment. An agent observing suspicious activity needs to know whether the system holds customer data or is an isolated development sandbox. Threat intelligence provides understanding of the adversary: the actions they take, the techniques they use, and the systems they target, which helps agents distinguish genuine threat indicators from benign operational patterns. Identity providers, HR systems, ticketing platforms, and cloud APIs all contain information a senior analyst would consult. Open integration protocols like the Model Context Protocol (MCP) enable agents to reach into adjacent systems, assembling the cross-functional awareness that experienced analysts build over years within an organization.

Response actions with human-in-the-loop. An AI SOC platform is not complete if agents can only investigate and report. Agents need to take response actions, such as revoking sessions, rotating credentials, or isolating endpoints, and interact with the broader organization to verify whether the activity was legitimate before escalating. The critical requirement is graduated autonomy governed by organizational policy. Low-risk, high-confidence actions can be fully automated. Higher-impact actions require explicit human approval. Every response action is logged with full reasoning and audit trail, and the automation boundary expands over time as the evidence base for agent reliability grows.
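Graduated autonomy can be pictured as a gate that every proposed action passes through. The following is a minimal sketch under stated assumptions: the impact tier assigned to each action and the 0.9 confidence floor are hypothetical policy choices, and a real policy would be set by the security team.

```python
from enum import Enum

class Decision(Enum):
    AUTO_EXECUTE = "auto_execute"
    REQUIRE_APPROVAL = "require_approval"

# Hypothetical policy table: impact tier per action, owned by the security team.
ACTION_IMPACT = {
    "revoke_session": "low",
    "rotate_credentials": "high",
    "isolate_endpoint": "high",
}

def gate(action: str, confidence: float, min_confidence: float = 0.9) -> Decision:
    """Automate only low-impact actions the agent is highly confident in."""
    # Actions missing from the policy table are treated as high impact.
    impact = ACTION_IMPACT.get(action, "high")
    if impact == "low" and confidence >= min_confidence:
        return Decision.AUTO_EXECUTE
    return Decision.REQUIRE_APPROVAL
```

Expanding the automation boundary then becomes a policy edit, such as reclassifying an action or lowering `min_confidence`, rather than a code change to the agent.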

Governance, Policy, and Trust

An AI SOC requires governance at least as rigorous as the controls applied to human analysts. Governance operates through policies that define which alert categories are eligible for auto-resolution, what severity thresholds trigger human review, which detection changes require approval, and what escalation criteria apply. The policy layer is how security leadership translates risk appetite into constraints agents enforce consistently.

Auditability is the foundation of trust in this model. Every agent action should be logged with complete reasoning, the evidence that informed the decision, and the identity and permissions under which it executed. Data isolation is non-negotiable: customer data remains within the customer's environment, is never shared across tenants, and is never used for model training. AI inference executes within dedicated, isolated compute boundaries that enforce the same access controls as the rest of the platform. Trust in AI-driven security operations is built through architecture, not assurances.
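The audit requirement above translates naturally into a structured record. The sketch below shows one hypothetical shape for such a record; the field names and the JSON-lines serialization are assumptions for illustration, not a documented log format.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone

def _utc_now() -> str:
    return datetime.now(timezone.utc).isoformat()

@dataclass(frozen=True)
class AuditRecord:
    action: str         # what the agent did
    reasoning: str      # why it did it
    evidence: tuple     # identifiers of the data that informed the decision
    identity: str       # identity the agent executed under
    permissions: tuple  # permissions in effect at execution time
    timestamp: str = field(default_factory=_utc_now)

def to_log_line(record: AuditRecord) -> str:
    """Serialize a record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

Recording identity and permissions with each action lets a reviewer confirm not only what an agent decided but whether it was authorized to act on that decision.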

The AI SOC Platform in Practice

The Panther platform embodies this agent-first AI SOC vision, informed by customer feedback from security teams actively navigating this transition. Panther's detections are predominantly implemented in Python, enabling agents to easily interpret detection intent and propose code changes through version-controlled workflows. The data lake is SQL-queryable, with structured schemas inferred by AI at ingest time. Triage, detection, investigation, and data all live within a single platform, making the closed loop operationally feasible. Context assembly is built and augmented through MCP integrations, and organizational guidance is expressed through risk profiles and per-detection runbooks that shape how agents prioritize, investigate, and respond.

The results from teams running this model in production reflect what happens when the closed loop is operating. HealthEquity reduced investigation times by 90%. Tealium achieved an 85% reduction in total alert volume. A Fortune 500 fintech saw weekly alert volume decline 47% over four months. Infoblox tunes detections 70% faster. These outcomes reflect a platform where agent outcomes flow back into the system, the system retains those learnings, and the team's capacity shifts from repetitive work to the strategic decisions that shape the security program.

The teams that build on closed-loop architectures now will compound their advantage as AI capabilities advance. The ones that don't will keep patching gaps between tools that were never designed to work together.
