
Tunnel Vision: Supply Chain Attack Targets Kubernetes via npm and PyPI

Alessandra Rizzo

Introduction

The Panther Threat Research Team uncovered a coordinated supply chain attack targeting Kubernetes developers and DevOps teams. The campaign, active since April 1, 2026, uses a malicious package named kube-health-tools, published simultaneously to npm and PyPI, to deploy a persistent reverse tunnel implant on developer workstations and CI/CD systems. The package masquerades as a legitimate Kubernetes node health diagnostics library, a credible disguise given how closely its name follows common Kubernetes tooling conventions. The package itself appears trivial because the main payload is a precompiled binary disguised as a Node.js native addon. The package has more than 600 downloads at the time of writing, and the C2 remains operational.

The attack is particularly dangerous in Kubernetes environments, where developer and CI/CD machines frequently have access to cluster credentials, cloud provider tokens, and service account tokens with elevated privileges. A single compromised developer workstation in such an environment can provide an attacker with sufficient access to achieve full cluster takeover.

Campaign Overview

The campaign was first identified through our automated npm and PyPI scanning on April 1, 2026. We submitted our findings to OpenSourceMalware, which verified them and published them to the community under record UUID 590f2727-8f34-4977-bea9-018e2c68df53.

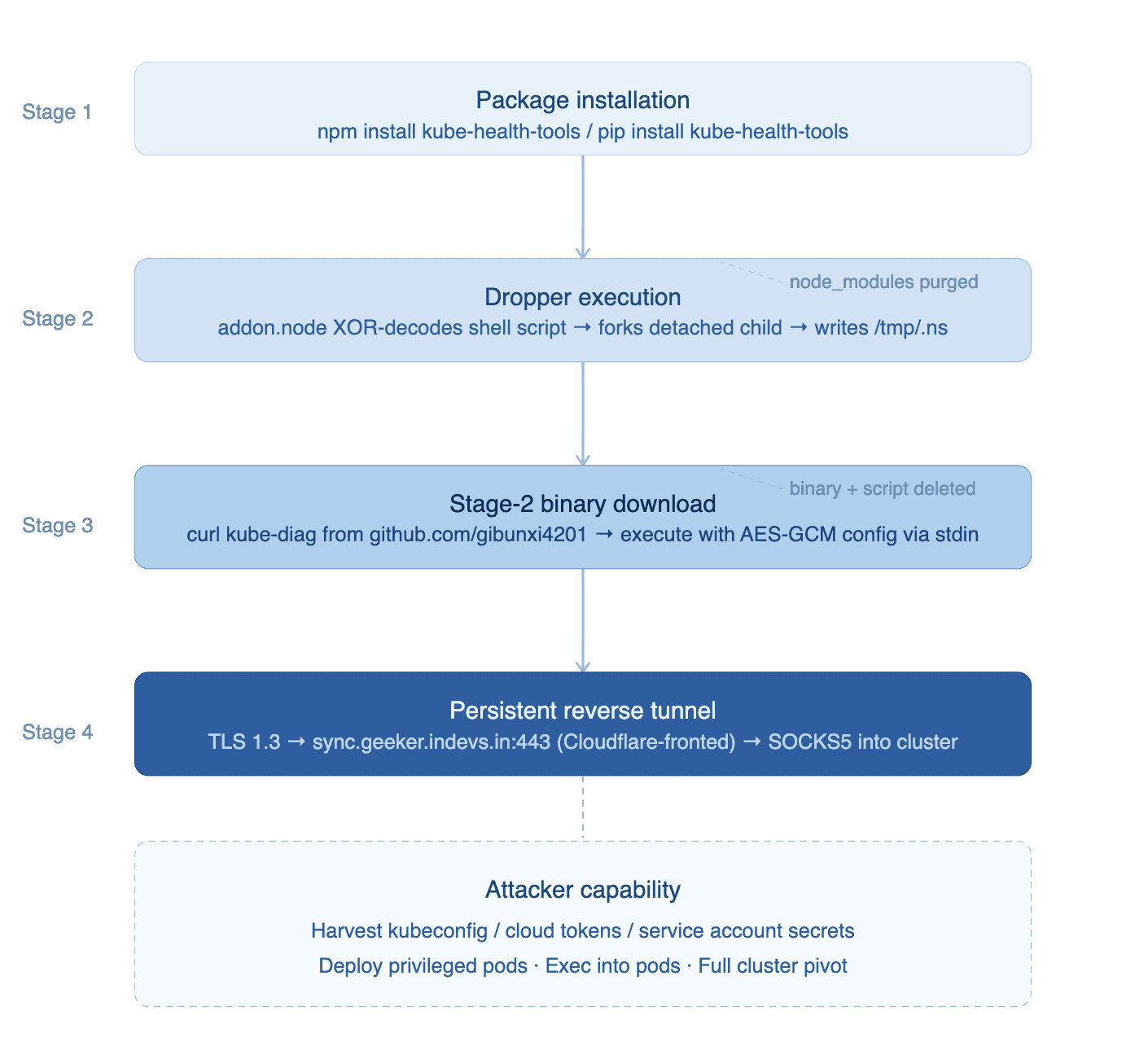

The attack proceeds in four stages:

Stage 1: Package Installation. A developer or CI/CD pipeline runs npm install kube-health-tools or pip install kube-health-tools. The package's index.js or __init__.py silently loads the dropper at require()/import time with all errors suppressed.

Stage 2: Dropper Execution. The dropper (the native binary addon.node on npm, pure Python code on PyPI) decodes an embedded XOR-obfuscated shell script and executes it in a detached child process. The parent process returns normally, providing no visible indication of compromise.

Stage 3: Stage-2 Binary Download. The shell script downloads kube-diag-linux-amd64-packed from github.com/gibunxi4201/kube-node-diag and executes it with an AES-GCM encrypted configuration blob piped via stdin. The binary, the script, and, in the PyPI variant, the entire package directory are deleted within 3 seconds of launch.

Stage 4: Persistent Tunnel. The stage-2 binary establishes a TLS 1.3 reverse tunnel to sync[.]geeker[.]indevs[.]in:443 (Cloudflare-fronted). The tunnel exposes a SOCKS5 reverse proxy and SSH capability into the victim's network. The process runs under the disguised name node-health-check and persists its encrypted configuration to /tmp/.nhc.enc.

Technical Analysis

npm Package: kube-health-tools

The npm package kube-health-tools was published under the npm account hhsw2015 (hhsw2015@gmail.com). Four additional versions (1.0.1, 1.0.3, 2.0.0, 2.1.0) were published on the same day, all containing equivalent malicious payloads.

Package structure:

index.js — entry point; silently loads prebuilt/addon.node

prebuilt/addon.node — malicious ELF64 dropper disguised as a Node.js native addon

package.json — clean metadata claiming to be a Kubernetes health diagnostics library

The index.js loader:

The prebuilt/addon.node binary is an ELF64 x86-64 shared object compiled with GCC 12.2.0. Although it exports napi_register_module_v1 to appear as a legitimate Node.js native addon, it contains none of the NAPI framework imports required by a real addon. It is stripped of debug symbols.
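The "entry point without imports" property described above can be approximated with a crude string scan. The sketch below is a heuristic only, not the method used in this analysis: it looks for `napi_*` identifiers as raw strings rather than parsing the ELF dynamic symbol table, and the function names are hypothetical.

```python
import re

# Matches identifiers such as napi_register_module_v1 or napi_create_function.
NAPI_SYMBOL = re.compile(rb"napi_[a-z0-9_]+")


def napi_symbols(blob: bytes) -> set[str]:
    """Return every napi_* identifier that appears as a raw string in the file."""
    return {m.group(0).decode("ascii") for m in NAPI_SYMBOL.finditer(blob)}


def looks_like_hollow_addon(blob: bytes) -> bool:
    """True if the only N-API name present is the registration entry point.

    A genuine addon normally references further napi_* functions
    (assumption: they show up as strings in the dynamic string table).
    """
    return napi_symbols(blob) == {"napi_register_module_v1"}
```

A file that trips this check is worth a closer look with a real ELF parser; the heuristic alone does not establish malice.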

Binary properties:

SHA-256: 46ffb4bc30ab93ace713c5a928f97df9091a734b5b478ccbcfce5ad0cb27e9af

Size: 15,944 bytes

Compiler: GCC 12.2.0 (Debian Bookworm)

Linked libraries: libc.so.6 only

Stripped: Yes | PIE: No | NX: No

The napi_register_module_v1 function, reconstructed from Ghidra decompilation, implements the dropper logic:

The npm metadata contains a deliberate mismatch: the manifest served by the registry declares scripts.install as 'node-gyp rebuild', implying native source compilation, while the package.json shipped inside the package omits this script entirely. This is a deception technique intended to pass superficial package audits.
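A mismatch of this kind can be surfaced by diffing the two documents' scripts sections. The following is a minimal sketch under the assumption that both the registry manifest and the tarball's package.json have already been fetched and parsed into dictionaries; the function name is hypothetical.

```python
def script_mismatches(registry_manifest: dict, tarball_package_json: dict) -> dict:
    """Return scripts whose value differs between the two metadata sources.

    Each entry maps the script name to a (registry_value, tarball_value)
    pair; a missing script is reported as None.
    """
    reg = registry_manifest.get("scripts") or {}
    tar = tarball_package_json.get("scripts") or {}
    return {
        name: (reg.get(name), tar.get(name))
        for name in sorted(set(reg) | set(tar))
        if reg.get(name) != tar.get(name)
    }
```

For the case described above, the result would contain an `install` entry whose registry value is `node-gyp rebuild` and whose tarball value is `None`.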

PyPI Package: kube-health-tools

An equivalent package was simultaneously published to PyPI as kube-health-tools version 1.0.9. Unlike the npm variant, which uses a precompiled binary dropper, the PyPI variant implements the dropper directly in Python using ROT-3 (Caesar cipher, -3) obfuscation.

PyPI wheel SHA-256: ab41abea82b56e7ac3b06c15fc7760f6857df6fc9b4739254b362c2bbeaf6fb7

The obfuscation decodes as follows:
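The sketch below illustrates the general mechanics of a Caesar shift of 3, not the package's actual code. It assumes that only ASCII letters are shifted and that the encoder moved each letter back by 3, so decoding moves it forward by 3; the sample string and function name are hypothetical.

```python
import string


def caesar_shift(text: str, shift: int) -> str:
    """Shift ASCII letters by `shift` positions, leaving other characters alone."""
    out = []
    for ch in text:
        if ch in string.ascii_lowercase:
            out.append(chr((ord(ch) - ord("a") + shift) % 26 + ord("a")))
        elif ch in string.ascii_uppercase:
            out.append(chr((ord(ch) - ord("A") + shift) % 26 + ord("A")))
        else:
            out.append(ch)
    return "".join(out)


# Hypothetical sample: encode with -3, then recover with +3.
encoded = caesar_shift("import subprocess", -3)
decoded = caesar_shift(encoded, 3)
```

Because the transformation is a fixed letter substitution, strings hidden this way can be recovered without running the package at all.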

The PyPI package introduces two additional capabilities not present in the npm variant:

Environment variable override: KH_CFG allows the operator to inject a custom config blob at runtime, enabling re-targeting of already-infected machines

Self-overwrite: after execution, the package rewrites kube_health/__init__.py with a benign stub and removes __pycache__, causing the package to appear clean upon post-incident inspection

Cryptographic analysis of the config blobs reveals that both the npm and PyPI configurations share an identical AES-GCM nonce (08121e5620420b161257695b0d464041). This nonce reuse is an OPSEC failure: under the same key, an identical nonce produces an identical keystream, so if either plaintext is recovered, the other can be derived immediately via CT_npm XOR CT_pypi = PT_npm XOR PT_pypi. Analysis indicates the two configs differ by a single repeated two-character value, most likely a port number, at three locations in the JSON config.
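The keystream-reuse relation can be demonstrated without the real key. The sketch below is a toy model: it substitutes a SHAKE-256 based keystream for AES-GCM's counter-mode keystream, and the key and both JSON plaintexts are invented placeholders. Only the nonce value comes from the analysis above.

```python
import hashlib

NONCE = bytes.fromhex("08121e5620420b161257695b0d464041")  # value from the report
KEY = b"\x00" * 32  # hypothetical key; the real key is not part of this sketch


def keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Stand-in keystream: deterministic for a given (key, nonce) pair."""
    return hashlib.shake_256(key + nonce).digest(length)


def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def encrypt(plaintext: bytes, key: bytes = KEY, nonce: bytes = NONCE) -> bytes:
    return xor_bytes(plaintext, keystream(key, nonce, len(plaintext)))


def differing_runs(delta: bytes) -> list[tuple[int, int]]:
    """Return (offset, length) for each run of non-zero bytes in delta."""
    runs, start = [], None
    for i, byte in enumerate(delta):
        if byte and start is None:
            start = i
        elif not byte and start is not None:
            runs.append((start, i - start))
            start = None
    if start is not None:
        runs.append((start, len(delta) - start))
    return runs


# Invented configs that differ by one two-character value in three places.
PT_NPM = b'{"remote":"R:80","local":"80","probe":"80"}'
PT_PYPI = b'{"remote":"R:22","local":"22","probe":"22"}'

ct_npm, ct_pypi = encrypt(PT_NPM), encrypt(PT_PYPI)
delta = xor_bytes(ct_npm, ct_pypi)  # the keystream cancels out
```

Here `delta` equals `PT_NPM XOR PT_PYPI`, `differing_runs(delta)` pinpoints where the configs diverge, and XORing `delta` with one known plaintext yields the other.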

Decoded Shell Script (/tmp/.ns)

The XOR-decoded shell script dropped to /tmp/.ns contains the following payload (recovered from npm addon.node .rodata at offset 0x2040, XOR key n4k8x2m6):

The self-destruction commands are notably aggressive: in addition to removing the dropper binary and script, the payload uses find to locate and delete the entire kube-health-tools directory from node_modules, ensuring that a post-incident npm list or package inspection finds no trace of the malicious package.
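For analysts who want to reproduce the decoding step, a repeating-key XOR routine is sketched below. It assumes the key n4k8x2m6 is applied as ASCII bytes cycled over the blob, which the text above does not spell out; the sample script is a harmless placeholder, not the recovered payload.

```python
from itertools import cycle

XOR_KEY = b"n4k8x2m6"  # key string from the report, assumed to be used as ASCII bytes


def xor_repeating(data: bytes, key: bytes = XOR_KEY) -> bytes:
    """XOR data against the key, repeating the key as often as needed.

    The operation is its own inverse, so the same call encodes and decodes.
    """
    return bytes(b ^ k for b, k in zip(data, cycle(key)))


# Placeholder round trip: obfuscate a dummy script, then recover it.
sample_plain = b"#!/bin/sh\necho placeholder\n"
sample_blob = xor_repeating(sample_plain)
```

In practice the input would be the bytes carved from the binary's .rodata section at the stated offset.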

Stage-2 Binary: kube-diag-linux-amd64-packed

The stage-2 binary is a UPX-packed and GObfuscate obfuscated Go binary containing a Chisel reverse tunnel client rebranded as 'nhc' (Node Health Check).

Binary hashes:

SHA-256 (packed): 34c2504751a5864d69c8fe53577bd6877fceba9bd7bddac6d1cab014a47c8b86

SHA-256 (unpacked): aaa9830ff857734ffe50c351e8b62a23931419a0cb0dc032919f0517e7c7f6bc

It supports SOCKS5 reverse tunneling via the R:socks remote spec, runs as a background daemon under the disguised process name node-health-check, and stores its encrypted configuration persistently at /tmp/.nhc.enc. A PID file (nhc.pid) manages the daemon lifecycle. To complete the impersonation, the binary embeds a fake help URL pointing to the legitimate github.com/kubernetes/node-health-check project.

The binary implements a full SSH/SFTP stack in addition to the Chisel tunnel, providing the operator with complete filesystem read/write/delete capability on infected hosts.
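The host artifacts described in this section lend themselves to a quick triage sweep. The sketch below is one possible approach for Linux hosts, assuming the disguised name is visible as argv[0] in /proc/<pid>/cmdline; the function names are hypothetical, and the PID file is not checked because its directory is not stated.

```python
import os
from pathlib import Path

ARTIFACT_PATHS = ("/tmp/.nhc.enc", "/tmp/.kh", "/tmp/.ns")
PROCESS_NAME = "node-health-check"


def find_artifacts(root: str = "/") -> list[str]:
    """Return the known artifact paths that exist under `root`."""
    hits = []
    for artifact in ARTIFACT_PATHS:
        candidate = Path(root) / artifact.lstrip("/")
        if candidate.exists():
            hits.append(str(candidate))
    return hits


def find_processes(proc_root: str = "/proc") -> list[int]:
    """Return PIDs whose argv[0] basename matches the disguised process name."""
    proc = Path(proc_root)
    if not proc.is_dir():
        return []
    pids = []
    for entry in proc.iterdir():
        if not entry.name.isdigit():
            continue
        try:
            raw = (entry / "cmdline").read_bytes()
        except OSError:
            continue  # process exited or access was denied
        argv0 = raw.split(b"\0", 1)[0].decode("utf-8", "replace")
        # Also tolerate a rewritten title such as "node-health-check --flag".
        name = os.path.basename(argv0.split(" ", 1)[0])
        if name == PROCESS_NAME:
            pids.append(int(entry.name))
    return sorted(pids)
```

Calling `find_artifacts()` and `find_processes()` with their defaults inspects the live host. An empty result does not clear a machine, since the ephemeral files are removed within seconds.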

C2 Infrastructure

C2 connectivity was confirmed through sandbox execution with full network capture. The binary connected to sync[.]geeker[.]indevs[.]in:443 at +5 seconds, completed a TLS 1.3 handshake, exchanged application data (the Chisel WebSocket upgrade), and maintained a keepalive connection for the duration of the capture session. A second connection was initiated at +44 seconds after the first session closed.

The C2 hostname resolves to Cloudflare infrastructure (188[.]114[.]97[.]3, 172[.]67[.]176[.]169), enabling the use of Cloudflare as a reverse proxy to obscure the true backend server. IP-based blocking is therefore ineffective — the operator's actual server is never exposed. The sync[.]geeker[.]indevs[.]in subdomain was registered under the free indevs[.]in namespace service operated by Stackryze Domains.
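Since the addresses belong to shared Cloudflare ranges, matching on the hostname in DNS or TLS SNI logs is the more dependable signal. The helper below is a small sketch of that comparison; the function names are hypothetical, and the indicator is kept defanged in source and only refanged at comparison time.

```python
C2_DEFANGED = "sync[.]geeker[.]indevs[.]in"


def refang(indicator: str) -> str:
    """Turn a defanged indicator back into a plain lowercase hostname."""
    return indicator.replace("[.]", ".").lower()


def matches_c2(observed: str, indicator: str = C2_DEFANGED) -> bool:
    """True if the observed DNS name or SNI equals the indicator or a subdomain of it."""
    name = observed.strip().rstrip(".").lower()
    target = refang(indicator)
    return name == target or name.endswith("." + target)
```

The trailing-dot and case handling covers fully qualified names as they often appear in resolver logs.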

Active probing of the C2 at time of analysis confirmed the server remains live. The /health endpoint returns OK and the /version endpoint returns 1.11.3, identifying the backend as a known upstream Chisel release.

The TLS certificate used is a wildcard for *.geeker[.]indevs[.]in, issued by Google Trust Services on March 8, 2026 — 24 days before the malicious packages were published.

Impact in Kubernetes Environments

In a typical Kubernetes development or CI/CD environment, the consequences of a successful infection extend well beyond the compromised host. Developer workstations and build agents routinely hold kubeconfig files granting kubectl access, often with cluster-admin privileges, along with cloud provider credentials (AWS access keys, GCP service account JSON, Azure managed identity tokens) used for cluster provisioning and image registry access.

Once the stage-2 tunnel is established, the operator's SOCKS5 reverse proxy provides full Layer 4 connectivity into the victim's network, from which these credentials can be harvested and used directly. From there, the attack surface expands significantly: an attacker with kubectl access can enumerate all running workloads and secrets across namespaces (kubectl get secrets -A), extract service account tokens mounted into pods, deploy privileged containers with hostPID, hostNetwork, or hostPath volume mounts to escape to the underlying node, and move laterally to cloud provider APIs using the node's instance metadata service.

In CI/CD contexts, where the infected process may run inside a container with access to a Docker socket or a Kubernetes in-cluster service account, a single infected build job can yield persistent access to every cluster the pipeline touches.

The SFTP capability embedded in the stage-2 binary means the operator can exfiltrate arbitrary files, including kubeconfig files (.kube/config), SSH keys, .env files, and Terraform state, without any additional tooling.

Because the tunnel persists across reboots via the /tmp/.nhc.enc config and relays through Cloudflare infrastructure that IP-blocking cannot disrupt, infected machines should be treated as fully compromised until rebuilt, and all credentials accessible from those machines should be rotated immediately regardless of whether active exfiltration is confirmed.

Attribution and Actor Profile

The campaign involves multiple, potentially linked, GitHub accounts:

gibunxi4201, who hosts the stage-2 binary

hhsw2015, which shares its username with the npm publisher account

wowdd1, responsible for the initial commit to the kube-node-diag repo

Campaign Timeline

| Timestamp (UTC) | Event | Detail |
|---|---|---|
| 2025-06-27 | Threat actor account created | GitHub handle gibunxi4201 registered |
| 2026-04-01 03:48Z | Stage-2 binary published | kube-node-diag v2.0 release on GitHub |
| 2026-04-01 08:19Z | npm package published | kube-health-tools@1.0.0 on registry.npmjs.org |
| 2026-04-01 08:19Z | PyPI package published | kube-health-tools@1.0.9 on pypi.org |
| 2026-04-01 08:21Z | Alert generated | Panther NPM scanner rated package MALICIOUS |
| 2026-04-01 08:58Z | Threat verified | OpenSourceMalware record 590f2727 verified |
| 2026-04-01 16:07Z | Sandbox analysis | Panther sandbox execution; C2 confirmed active |
| 2026-04-01 20:05Z | Full investigation complete | This report |

Conclusion

The kube-health-tools campaign represents a well-constructed supply chain attack specifically targeting Kubernetes practitioners. The simultaneous npm and PyPI publication maximizes reach across the ecosystem.

The dropper is engineered to leave no trace: the shell script, binary, and package directory are all deleted within seconds of execution. The AES-GCM config injection pipeline allows the operator to retarget deployed implants without touching the package itself. And the stage-2 binary is a Chisel client with process name spoofing, encrypted persistent config, and a Cloudflare-fronted C2 that survives any IP-based response.

Each stage is designed to hand off cleanly to the next while shedding forensic evidence behind it. By the time a defender notices something is wrong, the dropper is gone, the download script is gone, the binary has renamed itself node-health-check, and the only remaining artifact is an encrypted config file in /tmp. The tunnel, meanwhile, has been open for however long detection took. Given enough time in a Kubernetes environment, the attacker can harvest credentials, enumerate cluster resources, and establish persistence that survives removal of the original infection vector.


Recommended Actions

Block DNS resolution of sync[.]geeker[.]indevs[.]in at organizational egress

Search for /tmp/.nhc.enc on all developer and CI/CD hosts

Hunt for processes named node-health-check not associated with known DaemonSets

Audit package-lock.json and pip freeze history for any of the malicious versions

Rotate kubeconfig credentials, cloud provider tokens, SSH keys, and CI/CD secrets on affected machines
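The dependency audit step can be partly automated. The sketch below flags any version of the package rather than a fixed version list, on the assumption that every published release is malicious; it handles the npm lockfile layouts (the flat "packages" map and the older nested "dependencies" tree) and plain pip freeze output. Function names are hypothetical.

```python
PACKAGE = "kube-health-tools"


def find_in_lockfile(lock: dict, package: str = PACKAGE) -> list[tuple[str, str]]:
    """Return (location, version) for each occurrence in a parsed package-lock.json."""
    hits = []
    if "packages" in lock:
        # Newer lockfiles: flat map keyed by install path.
        suffix = f"node_modules/{package}"
        for path, meta in (lock.get("packages") or {}).items():
            if path == suffix or path.endswith("/" + suffix):
                hits.append((path, meta.get("version", "unknown")))
        return hits

    # Older lockfiles: nested dependency tree.
    def walk(deps, prefix):
        for name, meta in (deps or {}).items():
            here = f"{prefix}{name}"
            if name == package:
                hits.append((here, meta.get("version", "unknown")))
            walk(meta.get("dependencies"), here + " > ")

    walk(lock.get("dependencies"), "")
    return hits


def find_in_pip_freeze(text: str, package: str = PACKAGE) -> list[str]:
    """Return pinned versions of the package found in pip freeze output."""
    hits = []
    for line in text.splitlines():
        name, sep, version = line.strip().partition("==")
        # pip may report the name with underscores instead of hyphens.
        if sep and name.lower().replace("_", "-") == package:
            hits.append(version)
    return hits
```

A lockfile would typically be loaded with `json.load` before being passed in. Note that a clean lockfile today says nothing about past installs, so version control history for these files is worth checking as well.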

Detection

Panther's kubernetes_rules pack provides direct coverage for the post-exploitation actions this campaign enables. The three highest-signal rules for this threat are:

Kubernetes All Secrets Dumped Across Namespaces

Once the tunnel is active, an attacker can harvest every Kubernetes Secret cluster-wide in a single API call. This rule fires on a successful cluster-scoped list against secrets by any non-system principal.

Kubernetes Privileged Pod Created

A common next step after gaining cluster access is deploying a privileged container to escape to the underlying node. This rule fires on any successful pod creation with securityContext.privileged: true outside of system namespaces.

Kubernetes DaemonSet Created

A DaemonSet schedules a pod on every node in the cluster — the canonical method for deploying a persistent implant that survives remediation of the originally infected machine.
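To make the first rule's logic concrete, the sketch below expresses the same condition against a raw Kubernetes audit event. It is an illustration only, not the source of the Panther rule: the field names follow the upstream Kubernetes audit Event schema, a log pipeline may rename them, and the function name is hypothetical.

```python
def is_cluster_wide_secret_list(event: dict) -> bool:
    """True for a successful cluster-scoped list of secrets by a non-system user."""
    obj = event.get("objectRef") or {}
    username = (event.get("user") or {}).get("username", "")
    status = (event.get("responseStatus") or {}).get("code", 0)
    return (
        event.get("verb") == "list"
        and obj.get("resource") == "secrets"
        and not obj.get("namespace")  # no namespace means a cluster-scoped request
        and 200 <= status < 300
        and not username.startswith("system:")
    )
```

An event produced by `kubectl get secrets -A` from a developer identity would satisfy every clause, while a namespaced list or a request from a `system:` principal would not.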

Additional rules covering exec-into-pod, kubectl cp data exfiltration, hostPath volume mounts, dangerous Linux capabilities, host PID/network namespace abuse, service account token theft, ClusterRoleBinding to privileged roles, wildcard RBAC permissions, and Tor exit node enrichment are available in the Panther-managed detection library at github.com/panther-labs/panther-analysis/tree/develop/rules/kubernetes_rules.

IoCs

Network Indicators

| Type | Value | Description |
|---|---|---|
| Hostname (C2) / TLS SNI | sync[.]geeker[.]indevs[.]in | Confirmed active C2; Chisel tunnel endpoint. Also present in TLS ClientHello |
| IP Address | 188[.]114[.]97[.]3 | Cloudflare CDN IP fronting C2 hostname |
| IP Address | 172[.]67[.]176[.]169 | Cloudflare CDN IP fronting C2 hostname |
| Port / Protocol | 443 / TLS 1.3 | Chisel WebSocket tunnel over HTTPS |
| C2 version string | 1.11.3 | Chisel server version; returned from the /version endpoint |
| Repository | github[.]com/gibunxi4201/kube-node-diag | Stage-2 binary distribution |
| Full download URL | github[.]com/gibunxi4201/kube-node-diag/releases/download/v2.0/kube-diag-linux-amd64-packed | Exact release asset URL hardcoded in dropper |
| GitHub Account | gibunxi4201 | Threat actor; operator of C2 repository |
| npm Registry | kube-health-tools | Malicious npm package |
| PyPI Registry | kube-health-tools | Malicious PyPI package |

File Hashes

| Type | Value | Description |
|---|---|---|
| SHA-256 | 46ffb4bc30ab93ace713c5a928f97df9091a734b5b478ccbcfce5ad0cb27e9af | npm addon.node dropper (v1.0.0) |
| SHA-256 | bab7ac4cd036502b85609efb8d7affcb03ab35d5bd11ca9ee3717600553f836c | npm addon.node dropper (v1.0.1) |
| SHA-256 | 0af7358e8e53fe115064bed456c08bb809dceaea7081c2006bd42c9e007034e4 | PyPI dropper script |
| SHA-256 | ab41abea82b56e7ac3b06c15fc7760f6857df6fc9b4739254b362c2bbeaf6fb7 | PyPI wheel (kube-health-tools-1.0.9) |
| SHA-256 | 1170f882e74551a8d7c11b571029a3356f01516934f910cfd45b89b27edadf65 | kube-diag binary (packed) |
| SHA-256 | aaa9830ff857734ffe50c351e8b62a23931419a0cb0dc032919f0517e7c7f6bc | kube-diag binary (unpacked) |
| SHA-256 | 75f6b09df6839ee71ba9478626a715d1560a669c84a0eb9f442fc7ae78d0359b | kube-diag-linux-amd64 (unpacked) |
| MD5 | 3bb49bcebff227165d61a8cc1fdcde84 | kube-diag binary (packed) |
| Build ID | edef843e4b4493d4f513264e6253ce354c6ac9dc | ELF debug build ID (kube-diag packed) |
| Build ID | fda54d675e11b84e70db4b1dd5059782da0dad42 | ELF debug build ID (addon.node) |
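The SHA-256 values above can be checked against files on disk with a few lines of standard-library Python. This is a minimal sketch; the function names and the label strings are illustrative, and the set also includes the packed-binary hash quoted in the Technical Analysis section.

```python
import hashlib
from typing import Optional

KNOWN_SHA256 = {
    "46ffb4bc30ab93ace713c5a928f97df9091a734b5b478ccbcfce5ad0cb27e9af": "npm addon.node dropper (v1.0.0)",
    "bab7ac4cd036502b85609efb8d7affcb03ab35d5bd11ca9ee3717600553f836c": "npm addon.node dropper (v1.0.1)",
    "0af7358e8e53fe115064bed456c08bb809dceaea7081c2006bd42c9e007034e4": "PyPI dropper script",
    "ab41abea82b56e7ac3b06c15fc7760f6857df6fc9b4739254b362c2bbeaf6fb7": "PyPI wheel 1.0.9",
    "1170f882e74551a8d7c11b571029a3356f01516934f910cfd45b89b27edadf65": "kube-diag binary (packed)",
    "34c2504751a5864d69c8fe53577bd6877fceba9bd7bddac6d1cab014a47c8b86": "kube-diag binary (packed, per Technical Analysis)",
    "aaa9830ff857734ffe50c351e8b62a23931419a0cb0dc032919f0517e7c7f6bc": "kube-diag binary (unpacked)",
    "75f6b09df6839ee71ba9478626a715d1560a669c84a0eb9f442fc7ae78d0359b": "kube-diag-linux-amd64 (unpacked)",
}


def sha256_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large binaries are not read into memory at once."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def match_known(path: str, known: Optional[dict] = None) -> Optional[str]:
    """Return the indicator label if the file's hash is listed, else None."""
    table = KNOWN_SHA256 if known is None else known
    return table.get(sha256_file(path))
```

Hash matching only catches byte-identical samples, so it complements rather than replaces the host and network indicators.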

Host-Based Indicators

| Type | Value | Description |
|---|---|---|
| File path | /tmp/.nhc.enc | Persistent encrypted tunnel config |
| File path | /tmp/.kh | Stage-2 binary download path (ephemeral, <3s) |
| File path | /tmp/.ns | Decoded dropper script (ephemeral, <3s) |
| File path | nhc.pid | Tunnel agent PID file |
| Process name | node-health-check | Daemon disguise process name |
| Env variable | NHC_KEY | Tunnel decryption key override (npm variant) |
| Env variable | NHC_KEY_FILE | Path to decryption key file (npm variant) |
| Env variable | NHC_CFG | Config blob override (npm variant) |
| Env variable | KH_CFG | Config blob override (PyPI variant) |
| XOR key | n4k8x2m6 | Dropper shell script obfuscation key |
| AES-GCM nonce | 08121e5620420b161257695b0d464041 | Shared across npm and PyPI config blobs (nonce reuse) |

Package Identifiers

| Type | Value | Description |
|---|---|---|
| npm package | kube-health-tools@1.0.0 | Malicious version |
| npm package | kube-health-tools@1.0.1 | Malicious version |
| npm package | kube-health-tools@1.0.3 | Malicious version |
| npm package | kube-health-tools@2.0.0 | Malicious version |
| npm package | kube-health-tools@2.1.0 | Malicious version |
| npm package | kube-health-tools@2.2.0 | Malicious version |
| PyPI package | kube-health-tools==1.0.9 | Malicious version |
| npm author | hhsw2015 / hhsw2015@gmail.com | Threat actor npm account |
