What is a security data lake?

A security data lake (SDL) is a centralized repository for storing and managing all logs and other data relevant to an organization's security posture. An SDL can ingest data from myriad sources and integrate with other security analytics tools, providing a single place where security data is housed, parsed, searched, and used.

Why using a security data lake will future-proof your detection program for the next 10 years

Organizations today generate more data to monitor than ever. Traditional SIEMs were not designed to handle this scale, and as a result, teams struggle to meet their data throughput needs. To derive insights from all of this data, modern security teams must centralize data across on-premises, cloud, and SaaS environments and perform advanced analytics to detect and respond to sophisticated attackers.

As data volumes and attack surfaces continue to grow exponentially year over year, how can small teams keep their organizations secure? The answer lies in a technology that has powered business intelligence for years: data lakes.

Security is a data problem

There are four key data-related challenges security teams must overcome to support investigations and threat detection in the cloud:

Scale: Emerging EDR and XDR solutions generate large volumes of rich telemetry but are not responsible for managing the data they produce. Even if you retain that data in these tools and push it to a SIEM, searches can take hours or days, and the effort required to maintain and scale the deployment is massive.

Cost: Due to excessively high license costs, teams purposefully do not collect all of the security data they need to defend against attacks. This is a huge anti-pattern that causes key signals to be missed during an investigation or breaches to go unnoticed.

Signal: As the volume of data you process grows, it becomes more difficult to filter and detect malicious activity. Traditional SIEMs lack advanced analytics capabilities or rely on restrictive languages to query and interact with the data.

Unstructured data: According to IDC, 80% of the world’s data will be unstructured by 2025. Unstructured data is difficult to search and analyze at scale. To make matters worse, most security tools leave data normalization to the user, which makes it hard for security analysts to understand relationships between malicious indicators and events over time.

The rise of security data lakes

To overcome the challenges of scale, cost, structure, and detection capabilities, teams can take advantage of data lakes, which separate storage from compute. Most data lakes rely on managed, serverless services like Snowflake or S3, resulting in minimal operational overhead, better cost structures, flexibility in processing data, and extremely high scale. Additionally, standardizing on a data lake makes it straightforward to join in data from other parts of your IT organization for holistic context.

Security data lakes can help you centralize and store virtually unlimited amounts of data to power investigations, analytics, threat detection, and compliance initiatives. Without centralized, easily searchable data, analysts must pull logs from several sources to perform data-driven investigations. Security data lakes are designed to centralize all of your data so you can support complex security analysis use cases, including threat hunting at scale.

Advantages of a specialized data lake for security

Let’s take a closer look at the benefits a security data lake can bring to a modern security operations pipeline:

Collect and analyze security data holistically

Data lakes make scale much easier to achieve, helping teams normalize a wide variety of data types and make them searchable. Although users have to extract, transform, and load (ETL) this data into a proper format, it’s a one-time investment. Once the data is normalized, it can be used for effective threat detection and investigations. You can collect and analyze logs from dozens of data sources, including server and event logs, SaaS applications, and cloud resources, to gain complete visibility across all of your enterprise data sets.
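To make the “transform” step concrete, here is a minimal Python sketch that maps two differently shaped log records onto one shared schema. The input field names are modeled on simplified AWS CloudTrail and Okta System Log records; the output schema (`timestamp`, `source`, `actor`, `action`, `src_ip`) and all helper names are hypothetical choices for illustration, not Panther’s or any vendor’s actual normalization format.

```python
from datetime import datetime, timezone


def _to_utc_iso(ts: str) -> str:
    # Parse an ISO 8601 timestamp (a trailing "Z" is rewritten so older Pythons accept it)
    # and re-emit it in a single UTC format shared by every source.
    return datetime.fromisoformat(ts.replace("Z", "+00:00")).astimezone(timezone.utc).isoformat()


def normalize_cloudtrail(raw: dict) -> dict:
    # Simplified AWS CloudTrail record: eventTime, eventName, userIdentity.arn, sourceIPAddress
    return {
        "timestamp": _to_utc_iso(raw["eventTime"]),
        "source": "aws_cloudtrail",
        "actor": raw.get("userIdentity", {}).get("arn", "unknown"),
        "action": raw["eventName"],
        "src_ip": raw.get("sourceIPAddress"),
    }


def normalize_okta(raw: dict) -> dict:
    # Simplified Okta System Log event: published, eventType, actor.alternateId, client.ipAddress
    return {
        "timestamp": _to_utc_iso(raw["published"]),
        "source": "okta",
        "actor": raw.get("actor", {}).get("alternateId", "unknown"),
        "action": raw["eventType"],
        "src_ip": raw.get("client", {}).get("ipAddress"),
    }


# One normalizer per log type; writing these is the one-time ETL investment.
NORMALIZERS = {"aws_cloudtrail": normalize_cloudtrail, "okta": normalize_okta}


def normalize(log_type: str, raw: dict) -> dict:
    return NORMALIZERS[log_type](raw)


# Two logins by the same person, from two different tools, now share one schema.
events = [
    normalize("aws_cloudtrail", {
        "eventTime": "2024-01-15T10:00:00Z",
        "eventName": "ConsoleLogin",
        "userIdentity": {"arn": "arn:aws:iam::123456789012:user/alice"},
        "sourceIPAddress": "203.0.113.10",
    }),
    normalize("okta", {
        "published": "2024-01-15T10:05:00.000Z",
        "eventType": "user.session.start",
        "actor": {"alternateId": "alice@example.com"},
        "client": {"ipAddress": "203.0.113.10"},
    }),
]
```

Once both sources share field names such as `actor` and `src_ip`, a single query or detection can correlate activity across them without per-source special cases.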

Limitless scale, faster time-to-value

To keep up with cloud-scale data demands, security teams need the ability to scale up very quickly. With a security data lake, teams can start small and expand as needed to effectively investigate security incidents across petabytes of data. By centralizing all your security data in a data lake, critical security questions can be answered in seconds or minutes, instead of hours or weeks.

Enrich large volumes of security data

Data enrichment is key to effective threat detection and better incident response. Security events can be enriched by adding event and non-event contextual information such as identity context (user, host, IP addresses), vulnerability context (scan reports), business context, and more. Context plays an important role in eliminating noise, which in turn helps prioritize higher-risk threats.
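A minimal sketch of enrichment, assuming the context lives in simple in-memory lookup tables. The table names, hosts, owners, and the `enrich`/`priority` helpers below are hypothetical; in a real deployment this context would typically be joined in from an asset inventory, identity provider, or vulnerability scanner. The `priority` function shows how context can rank otherwise identical events differently.

```python
# Hypothetical context tables; real deployments would source these from an
# asset inventory, identity provider, or vulnerability scanner.
IP_TO_HOST = {"10.0.0.5": "build-server-01", "10.0.0.9": "laptop-bob"}
HOST_OWNER = {"build-server-01": "platform-team", "laptop-bob": "bob@example.com"}
HOST_CRITICAL_VULNS = {"build-server-01": ["CVE-2021-44228"]}


def enrich(event: dict) -> dict:
    """Return a copy of the event with identity and vulnerability context attached."""
    enriched = dict(event)
    host = IP_TO_HOST.get(event.get("src_ip"))
    enriched["host"] = host  # identity context: which machine the IP belongs to
    enriched["owner"] = HOST_OWNER.get(host)  # ownership context: who is responsible for it
    enriched["critical_vulns"] = HOST_CRITICAL_VULNS.get(host, [])  # vulnerability context
    return enriched


def priority(event: dict) -> str:
    # The same event ranks higher when it touches a host with known critical vulnerabilities
    return "high" if event["critical_vulns"] else "low"


server_login = enrich({"action": "ssh_login", "src_ip": "10.0.0.5"})
laptop_login = enrich({"action": "ssh_login", "src_ip": "10.0.0.9"})
unknown_login = enrich({"action": "ssh_login", "src_ip": "192.0.2.1"})
```

All three events are the same SSH login; only the attached context separates the one worth investigating first from the noise.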

Affordable pricing at scale

Teams purposefully hold back on collecting security data because of the high cost of SIEMs. With security data lakes, you pay only for the compute you use, not for idle time, and you can save further by allocating right-sized compute resources to each workload.

Building security intelligence

Data lake technology relies on ETL pipelines to coalesce data into proper formats. Teams can use these same mechanisms to derive intelligence ahead of time in the form of “fact tables”, which saves compute time during an investigation. For example, a raw table may contain every API call made into an AWS account, while a scheduled job copies only the administrative calls into a dedicated “fact table”, so investigators can query that small table instead of scanning the full history.
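The derived-table idea can be sketched with Python’s built-in `sqlite3` module standing in for the data lake. The table names, columns, and the list of actions treated as “admin calls” are assumptions for illustration; a real pipeline would run a similar `INSERT ... SELECT` on a schedule in the lake’s own SQL engine.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Raw table: every API call recorded for an AWS account (simplified, CloudTrail-like columns)
conn.execute("CREATE TABLE api_calls (event_time TEXT, actor TEXT, event_name TEXT)")
conn.executemany(
    "INSERT INTO api_calls VALUES (?, ?, ?)",
    [
        ("2024-01-15T10:00:00Z", "alice", "DescribeInstances"),
        ("2024-01-15T10:01:00Z", "alice", "AttachUserPolicy"),
        ("2024-01-15T10:02:00Z", "bob", "GetObject"),
        ("2024-01-15T10:03:00Z", "bob", "CreateAccessKey"),
    ],
)

# Hypothetical definition of "admin calls": IAM actions that change identities, privileges, or credentials
ADMIN_ACTIONS = ("CreateUser", "AttachUserPolicy", "PutUserPolicy", "CreateAccessKey")

# The "scheduled job" (run once here): copy only the admin calls into a much smaller derived table
conn.execute("CREATE TABLE admin_calls (event_time TEXT, actor TEXT, event_name TEXT)")
placeholders = ", ".join("?" for _ in ADMIN_ACTIONS)
conn.execute(
    f"INSERT INTO admin_calls SELECT * FROM api_calls WHERE event_name IN ({placeholders})",
    ADMIN_ACTIONS,
)
conn.commit()

# During an investigation, analysts query the small table instead of the full history
rows = conn.execute("SELECT actor, event_name FROM admin_calls ORDER BY event_time").fetchall()
```

The filtering cost is paid once by the job rather than on every investigative query, which is where the time savings come from.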

Building your security data lake with Panther

Maintaining strong security as your data volumes grow exponentially is a challenging task. Panther is a petabyte-scale security analytics platform that operationalizes massive volumes of data for teams to identify suspicious activity and prevent breaches!

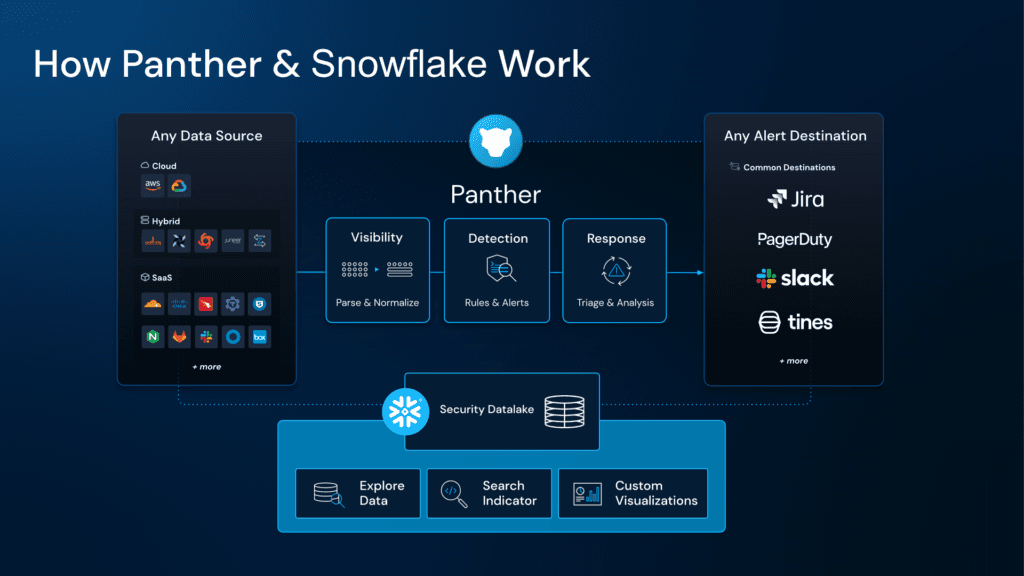

With Panther, you can collect security logs at scale, analyze them with Python, generate alerts in real time, and build a scalable security data lake in Snowflake. Its modular and open approach offers easy integration and flexible detections to help you build a modern security operations pipeline.
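Panther detections are written as Python functions: a rule defines `rule(event)`, which returns `True` when an alert should fire, and can optionally define `title(event)` to build the alert text. The sketch below follows that shape, but the event is a plain dict (so it runs without Panther), and the logic, flagging AWS root-account console logins from CloudTrail fields, is an illustrative example rather than one of Panther’s built-in detections.

```python
def rule(event: dict) -> bool:
    # Illustrative logic: alert on console logins performed by the AWS account's root user
    return (
        event.get("eventName") == "ConsoleLogin"
        and event.get("userIdentity", {}).get("type") == "Root"
    )


def title(event: dict) -> str:
    # Human-readable alert title built from fields of the triggering event
    return f"Root console login from {event.get('sourceIPAddress', 'an unknown IP')}"


# Sample events (hypothetical values) for exercising the rule locally
root_login = {
    "eventName": "ConsoleLogin",
    "userIdentity": {"type": "Root"},
    "sourceIPAddress": "198.51.100.7",
}
iam_user_login = {"eventName": "ConsoleLogin", "userIdentity": {"type": "IAMUser"}}
```

Keeping detections as ordinary Python functions means they can be unit-tested against sample events like these before they are deployed.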

How it works

Panther collects security logs from cloud and on-premises data sources via AWS S3, SQS, SNS, custom HTTP webhooks, Google Cloud Storage, Azure Blob Storage, or native SaaS API integrations.

Panther parses, normalizes, and analyzes your data with more than 500 built-in Python detections or your own custom ones.

Suspicious events are flagged and alerted upon in real time.

Normalized data is persisted in a security data lake (Snowflake) to power threat hunting and security investigations at scale.
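The four stages above can be sketched as a single loop. This is a simplified, hypothetical illustration of the flow, not Panther’s implementation: raw lines arrive as JSON strings, normalization keeps a fixed set of fields, detections are plain Python functions, and an in-memory SQLite table stands in for Snowflake. The names `failed_login` and `run_pipeline` are invented for this example.

```python
import json
import sqlite3
from typing import Callable, Dict, Iterable, List


def failed_login(event: Dict[str, str]) -> bool:
    # Hypothetical detection standing in for a full rule set
    return event["action"] == "login" and event["outcome"] == "failure"


def run_pipeline(
    raw_lines: Iterable[str],
    detections: List[Callable[[Dict[str, str]], bool]],
    lake: sqlite3.Connection,
) -> List[Dict[str, str]]:
    alerts = []
    for line in raw_lines:
        record = json.loads(line)  # 1. parse a collected raw log line
        event = {  # 2. normalize to a fixed set of fields
            "action": record.get("action", "unknown"),
            "outcome": record.get("outcome", "unknown"),
            "actor": record.get("actor", "unknown"),
        }
        for detect in detections:  # 3. evaluate every detection against the event
            if detect(event):
                alerts.append({"detection": detect.__name__, **event})  # 4. raise an alert right away
        # 5. persist every normalized event, alerting or not, for later threat hunting
        lake.execute("INSERT INTO events VALUES (:action, :outcome, :actor)", event)
    lake.commit()
    return alerts


lake = sqlite3.connect(":memory:")
lake.execute("CREATE TABLE events (action TEXT, outcome TEXT, actor TEXT)")
raw_logs = [
    '{"action": "login", "outcome": "success", "actor": "alice"}',
    '{"action": "login", "outcome": "failure", "actor": "mallory"}',
]
alerts = run_pipeline(raw_logs, [failed_login], lake)
```

Persisting every event, not just the ones that alert, is what lets analysts later hunt through activity that no detection flagged at ingest time.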

Using Snowflake as a security data lake

Centralizing data is one of the biggest challenges in modern security programs. Storing security data in Snowflake offers cloud-first organizations several benefits, including affordable long-term storage, massively scalable infrastructure to power investigations, and a rich ecosystem of integrations. In addition, Snowflake’s unique architecture delivers a single, seamless data experience across multiple clouds and regions, which helps teams build detection programs in scenarios where data sources are highly distributed.

With Panther, any security team can quickly bootstrap Snowflake as their security data lake for effective threat detection and response. Together, Snowflake and Panther can help organizations build a data-driven security program and achieve better security at scale with agility, cost efficiency, and end-to-end visibility.

Watch our on-demand webinar to learn how to leverage Panther and Snowflake to deliver a modern and scalable detection and response program.

Get started

Don’t let your traditional SIEM slow you down! Learn how to secure your cloud, network, applications, and endpoints with Panther. Request a personalized demo today.